From Terminator to Skynet

March 14, 2017

Longshaokan (Marshall) Wang, PhD Candidate

If you’ve seen the movie The Terminator, you probably remember the scary robot that would stop at nothing to kill the protagonist because it was programmed to do so. The mastermind behind the Terminator is Skynet, the Artificial Intelligence (AI) that broke free of its human creators and evolved on its own to achieve superhuman intelligence. Skynet could think independently and issue commands, whereas the Terminator merely received them. We call the former a self-evolving AI and the latter a deterministic AI. It isn’t difficult to see which one is the smarter AI.

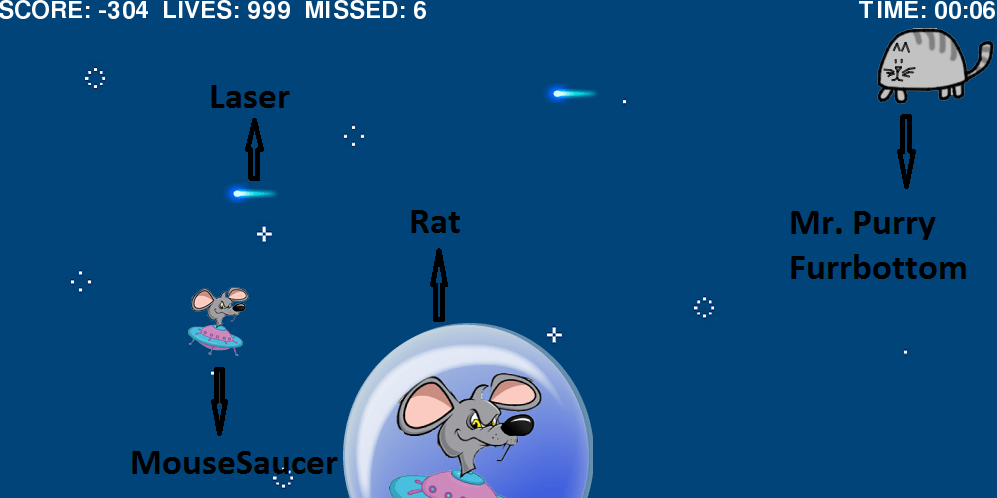

Deterministic AIs are much easier to create. For example, in the video game LaserCat (play it here), you control a cat that shoots lasers at mice. You gain points when you kill a mouse and lose points when you collide with a mouse or let one escape. The number of points for each kill is proportional to the distance between the cat and the right boundary, because it’s harder to react when the cat is far from the right side.

Laser cat

If we build a deterministic AI to control the cat, we can extract the speeds of the mice, the lasers, and the cat, as well as how often a laser is fired. Then, each time the next laser is about to appear, we can move the cat in front of a mouse, as close as possible without running into it. Notice that we are using a lot of prior knowledge about the game, including its rules and the objects’ mechanics. When building AIs to solve real-world problems, it is often difficult to acquire such domain knowledge.
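To make the hand-crafted approach concrete, here is a minimal Python sketch of such a rule. It is not LaserCat's actual code: the `Mouse` class, the constant horizontal mouse speed, the `safe_gap` parameter, and both function names are assumptions made up for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class Mouse:
    x: float
    y: float
    vx: float  # signed horizontal speed, assumed constant (hypothetical mechanic)


def plan_cat_target(mouse, time_to_next_laser, safe_gap):
    """Return the (x, y) spot where the cat should stand when the next laser fires."""
    # Predict where the mouse will be at the moment the laser appears.
    mouse_x_at_fire = mouse.x + mouse.vx * time_to_next_laser
    # Stand in the mouse's path, safe_gap ahead of it, in the same row.
    direction = 1.0 if mouse.vx >= 0 else -1.0
    return (mouse_x_at_fire + direction * safe_gap, mouse.y)


def reachable(cat_xy, target_xy, cat_speed, time_available):
    """Check whether the cat can cover the distance before the laser fires."""
    distance = math.hypot(target_xy[0] - cat_xy[0], target_xy[1] - cat_xy[1])
    return distance <= cat_speed * time_available
```

Every number this rule needs (speeds, laser timing, a safe gap) has to be measured or guessed by the programmer in advance, which is exactly the domain knowledge the paragraph above refers to.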

Is it possible, then, to build a self-evolving AI that knows nothing about the rules or mechanics but can practice and improve on its own, using only the information on the screen, the way a human player does? Such an AI is not science fiction. We can build it with a Reinforcement Learning algorithm. Unsurprisingly, the concept originated from human learning. If we receive a reward after performing some action, then that action is reinforced and we are more likely to perform it again. For instance, if the food tastes amazing when we try a new recipe, then we are likely to reuse that recipe in the future. If we receive a penalty (a negative reward) instead, we are less likely to perform the corresponding action again. A Reinforcement Learning algorithm can make the AI behave in a similar fashion. In the beginning, the AI cat will just explore random actions. If an action leads to a collision with a mouse, the AI will observe a decrease in the score and will avoid that action in the future. If an action leads to killing a mouse and an increase in the score, it will try to repeat that action. It will even figure out that shooting a mouse farther away from the right boundary is more desirable. Through trial and error and constant updates of its estimate of the best action in each scenario, the AI cat will become smarter and smarter and reach a superhuman level on its own. (Video demo)
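The trial-and-error loop described above can be sketched with tabular Q-learning, one of the simplest Reinforcement Learning algorithms. This is only a toy stand-in, not the method used for the LaserCat demo: the five-position corridor, the +1 and -1 rewards, and all function names and parameter values are assumptions chosen to keep the example small.

```python
import random

N_STATES = 5        # positions 0..4 on a line; reaching 0 or 4 ends the episode
ACTIONS = (-1, +1)  # action 0 steps left, action 1 steps right


def step(state, action_index):
    """Toy environment: returns (next_state, reward, done)."""
    next_state = state + ACTIONS[action_index]
    if next_state == N_STATES - 1:
        return next_state, 1.0, True   # hypothetical reward, like hitting a mouse
    if next_state == 0:
        return next_state, -1.0, True  # hypothetical penalty, like a collision
    return next_state, 0.0, False


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Learn action values purely from observed rewards, with no rules given."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # q[state][action]
    for _ in range(episodes):
        state = rng.choice([1, 2, 3])
        done = False
        while not done:
            if rng.random() < epsilon:
                action = rng.randrange(2)  # explore a random action
            else:
                best = max(q[state])       # exploit, breaking ties at random
                action = rng.choice([a for a in range(2) if q[state][a] == best])
            next_state, reward, done = step(state, action)
            # Q-learning update: move the estimate toward reward + discounted future value.
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
    return q


def greedy_policy(q):
    """Best known action for each non-terminal position."""
    return {s: max(range(2), key=lambda a: q[s][a]) for s in (1, 2, 3)}
```

Calling `greedy_policy(train())` gives the action the agent has come to prefer in each position. A real game has far too many screen states for a table like `q`, so practical systems typically replace the table with a learned function approximator such as a neural network.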

Building a self-evolving AI for a video game might not seem very important, given that we can build a deterministic AI that performs reasonably well. But as mentioned, in real life it can be very challenging to acquire domain expertise and hand-craft AIs. AIs powered by Reinforcement Learning algorithms, in contrast, can discover strategies that humans may not think of. This is evidenced by AlphaGo, an AI trained in part by playing the game Go against itself. It was eventually able to beat a human world champion. Reinforcement Learning allows us to move from building the Terminator to building Skynet. We can only hope that the AIs will remain friendly after they evolve.

Marshall is a PhD Candidate whose research focuses on artificial intelligence, machine learning, and sufficient dimension reduction. We thought this posting was a great excuse to get to know a little more about him, so we asked him a few questions!

What do you find most interesting/compelling about your research?

Reinforcement learning and deep learning have very broad applications. I can be designing an algorithm to play video games one day, and using that algorithm to find cures for cancer the next.

What do you see as the biggest or most pressing challenges in your research area?

There aren’t enough statisticians dedicated to AI research, even though building AIs can involve regression, classification, optimization, model selection, and other statistical topics. Right now it seems that computer scientists are taking most of the cake.

What is your deepest darkest fear? Please answer in the form of a haiku.

Nova of nuke

blossoms from alien ship–

July Fourth